March 17, 2026

The first part of this two-part article described a verification crisis driven by the rapid acceleration of AI‑generated disinformation. By 2026, disinformation campaigns have evolved from human‑crafted narratives into automated, multimodal, and coordinated AI systems capable of manipulating public opinion, destabilizing institutions, and contaminating AI training pipelines – effectively eroding trust in digital content.

Three converging domains now define the digital disinformation landscape:

AI-Driven Disinformation – AI enables unprecedented velocity and scale. Deepfake incidents have surged, bot traffic exceeds human traffic, and AI-coordinated influence operations are now a strategic instrument of statecraft and politics at every level. AI swarms (autonomous, adaptive synthetic personas) represent the most advanced threat because they roam the Internet pushing a specific agenda.

Provenance Infrastructure – Reproducible provenance (C2PA, watermarking, cryptographic signatures) is emerging as foundational security infrastructure. Provenance prevents data poisoning, enables traceability, and restores trust in digital media.

AI Security – AI systems are both targets and tools of attack which can individually lead to subsequent disinformation creation and deployment. Threats include data poisoning, adversarial manipulation, model inversion, and synthetic identity generation. Security‑first AI governance and authentic-by-design approaches are now essential.

Part 2 of this two-part article recommends ways to combat disinformation and describes a unified architecture that combines provenance verification, multimodal deepfake detection, behavioral analysis, AI‑security controls, and intervention strategies into a layered defense. An ecosystem approach treats disinformation as a systemic risk requiring cross‑disciplinary and cross-national coordination of disinformation intelligence. A personalized AI filter can help improve cognitive defenses when a reader is confronted with new information.

Countering AI-Driven Disinformation: Soft Power and Technical Countermeasures

Part 1 of this article demonstrated that AI-driven disinformation represents a systemic challenge within modern information ecosystems. Advances in generative AI, automated influence systems, and synthetic media technologies have significantly expanded the capacity of malicious actors to manipulate digital discourse. However, a growing body of interdisciplinary research suggests that the risks associated with disinformation can be mitigated through coordinated interventions that combine technical safeguards, institutional governance, and societal resilience.

Effective countermeasures must address both the supply side of disinformation—where misleading or fabricated content is generated and distributed—and the demand side, which includes the social, psychological, and institutional conditions that allow such content to gain traction among audiences. Consequently, contemporary research increasingly emphasizes a multilayered defense strategy that integrates technological detection systems with broader social and regulatory responses.

Cognitive Defenses

A number of large-scale research syntheses have examined the effectiveness of different approaches to mitigating disinformation. Reviews conducted by organizations such as the Carnegie Endowment for International Peace, the RAND Corporation, the International Panel on the Information Environment, and the Union of Concerned Scientists identify several consistent findings regarding the most effective cognitive intervention strategies.

One of the most widely supported approaches is pre-bunking, also referred to as cognitive inoculation. Unlike traditional fact-checking, which attempts to correct misinformation after it has already spread, pre-bunking exposes individuals to common manipulation techniques before they encounter disinformation in real-world contexts. Experimental studies suggest that short educational interventions—such as explanatory videos, interactive games, or narrative warnings about manipulation tactics—can significantly reduce the likelihood that individuals will later accept or share misleading content.

Closely related to pre-bunking are accuracy nudges, which prompt users to consider the reliability of information before sharing it. Even simple prompts asking users whether they believe a piece of content is accurate have been shown to reduce the spread of misinformation on social media platforms. These interventions are particularly effective when integrated into platform design, as they introduce small friction points that encourage more deliberate information-sharing behavior.

Despite the growing emphasis on preventive approaches, fact-checking systems remain an important component of the information integrity ecosystem. Independent fact-checking organizations analyze disputed claims, provide evidence-based assessments, and disseminate corrections through both traditional media and digital platforms. Research suggests that fact-checking is most effective when corrections are delivered rapidly, supported by transparent evidence, and communicated by trusted messengers. Global networks of fact-checking organizations coordinated through initiatives such as the International Fact-Checking Network play a critical role in maintaining the credibility and consistency of these efforts. For example, several organizations in this space track media bias, with AllSides and FactCheck.org as two leaders. These websites aim to provide information about the bias and reliability of various media sources and other fact-checking organizations.

These studies and institutional capabilities collectively highlight the importance of integrating human-centered approaches with technological solutions. While AI systems can detect and flag suspicious content, the ultimate effectiveness of countermeasures often depends on the ability of individuals and communities to interpret and respond to information critically.

National and Institutional Counter-Disinformation Strategies

Governments around the world have increasingly recognized disinformation as a national security and public governance issue. As a result, many countries have begun developing institutional frameworks designed to coordinate responses across government agencies, technology platforms, and civil society organizations.

For example, Ireland has established a National Counter Disinformation Strategy aimed at strengthening election integrity, improving coordination among government agencies, and promoting media literacy initiatives. Similarly, the United Kingdom’s Counter Disinformation Unit monitors emerging narratives, collaborates with digital platforms, and supports responses to disinformation related to elections, public health, and national security.

In the United States, counter-disinformation efforts are distributed across multiple agencies, including the Department of Homeland Security, the Cybersecurity and Infrastructure Security Agency, and intelligence community organizations responsible for monitoring foreign influence operations. Within the European Union, regulatory initiatives such as the Digital Services Act have introduced comprehensive requirements for large digital platforms to assess and mitigate systemic risks associated with algorithmic content distribution.

The Digital Services Act represents one of the most ambitious regulatory frameworks currently in operation. Among its provisions are requirements for algorithmic transparency, independent audits of platform risk management practices, and enhanced data access for researchers studying online information ecosystems. These regulatory mechanisms aim to create structural incentives for platforms to address the spread of harmful or manipulated content while preserving the open nature of digital communication networks.

Civil Society and Soft Power Interventions

Beyond government initiatives, civil society organizations play a critical role in strengthening societal resilience against disinformation. Research from institutions such as the Foreign Policy Research Institute emphasizes the importance of soft power strategies that address the underlying social dynamics of information manipulation.

Soft power interventions typically focus on strengthening the informational resilience of communities rather than directly suppressing misleading content. These approaches may include strategic communication campaigns, public awareness initiatives, community-based media literacy programs, and the promotion of trusted information sources. By improving the public’s ability to evaluate information critically, such initiatives reduce the likelihood that disinformation campaigns will achieve their intended influence.

Counter-narrative strategies also play an important role in this context. Rather than simply correcting false claims, counter-narratives provide alternative interpretations that reinforce accurate information while addressing the emotional or ideological motivations that may make disinformation appealing to certain audiences.

Technical Countermeasures: From Content Analysis to Behavioral Detection

The growing sophistication of AI-driven disinformation campaigns has prompted the development of increasingly advanced technical countermeasures. Early efforts to detect misinformation focused primarily on evaluating the factual accuracy of individual pieces of content. However, modern influence operations frequently combine authentic information, manipulated media, and coordinated amplification strategies, making content-based detection alone insufficient. As a result, contemporary research emphasizes a broader set of detection techniques that analyze not only the characteristics of digital content but also the behavioral patterns and network structures through which information spreads.

Artificial intelligence plays a central role in these defensive strategies. Machine learning systems are capable of processing large volumes of digital data across multiple platforms, enabling real-time identification of suspicious patterns in both media content and user behavior. When integrated with human oversight and transparent governance frameworks, these technologies provide scalable mechanisms for detecting and mitigating coordinated influence operations.

Three categories of technical countermeasures are particularly prominent in current research: multimodal deepfake detection, behavioral and network-level analysis, and linguistic forensic modeling. Each approach targets different stages of the disinformation lifecycle and contributes to a multilayered defense architecture.

Multimodal Deepfake Detection

Synthetic media technologies have advanced rapidly in recent years, making it increasingly difficult for human observers to distinguish manipulated content from authentic recordings. Deepfake videos and audio clips can convincingly imitate the appearance and voice of public figures, enabling impersonation attacks that may be used for political manipulation, financial fraud, or reputational damage. Because these technologies often combine multiple forms of media—including facial imagery, voice synthesis, and contextual language—effective detection requires the integration of several analytical techniques.

Multimodal deepfake detection systems analyze multiple signals within digital media simultaneously. Visual analysis techniques examine facial micro-expressions, lighting consistency, and geometric relationships between facial features. Convolutional neural networks trained on large datasets of manipulated media can identify subtle artifacts introduced during the synthesis process.

Temporal analysis methods focus on inconsistencies in motion patterns across video frames. Recurrent neural networks and transformer-based models can analyze sequences of frames to identify irregularities in facial movement, blinking patterns, or lip synchronization that may indicate synthetic manipulation.

Audio analysis techniques evaluate speech characteristics such as frequency patterns, spectral signatures, and vocal timing. Synthetic voice generation systems sometimes produce subtle artifacts that differ from natural speech patterns, allowing detection algorithms to identify potential manipulation.

Finally, cross-modal consistency checks compare different elements of multimedia content to ensure that audio, video, and contextual information align with one another. For example, systems may analyze whether lip movements correspond accurately to the spoken audio track or whether facial expressions are consistent with the emotional tone of the speech.

The following table provides a summary of some high-confidence methods and tools used for deepfake detection:

| Detection Category | Techniques |
| --- | --- |
| Visual artifacts | CNN analysis |
| Temporal signals | RNN analysis |
| Physiological signals | Blink patterns |
| Audio analysis | Spectral fingerprinting |
| Cross-modal analysis | Lip-sync consistency |

By integrating these analytical techniques, multimodal detection systems can identify manipulated media even when individual signals appear authentic. However, as generative models continue to improve, detection systems must evolve continuously to keep pace with advances in synthetic media generation.
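As a rough illustration of how per-modality signals might be combined, the sketch below fuses four detector outputs into one manipulation score. It is a minimal sketch under stated assumptions, not a description of any specific detection product: the `ModalityScores` fields, the `WEIGHTS` values, and the `single_signal_threshold` rule are all hypothetical, and each score is assumed to be a probability-like value in [0, 1] produced by an upstream detector.

```python
from dataclasses import dataclass


@dataclass
class ModalityScores:
    """Per-modality manipulation scores in [0, 1] (1 = likely synthetic)."""
    visual: float
    temporal: float
    audio: float
    cross_modal: float


# Hypothetical weights chosen for illustration only; they sum to 1.
WEIGHTS = {"visual": 0.3, "temporal": 0.2, "audio": 0.2, "cross_modal": 0.3}


def fuse(scores: ModalityScores, single_signal_threshold: float = 0.9) -> float:
    """Combine modality scores into a single manipulation score."""
    values = {name: getattr(scores, name) for name in WEIGHTS}
    for name, value in values.items():
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} score must be in [0, 1], got {value}")

    weighted = sum(WEIGHTS[name] * value for name, value in values.items())
    strongest = max(values.values())

    # One very strong signal (for example a lip-sync mismatch) should not be
    # averaged away by other modalities that look authentic.
    if strongest >= single_signal_threshold:
        return max(weighted, strongest)
    return weighted
```

The override rule reflects the point made above: a clip can be flagged even when most individual signals appear authentic, as long as one modality shows strong evidence of manipulation.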

Behavioral and Network-Level Detection

Although content-based detection methods are important, many modern disinformation campaigns rely on coordinated amplification strategies rather than purely fabricated media. In such cases, the content itself may appear authentic, but the pattern of distribution and engagement reveals underlying manipulation. Behavioral and network-level detection methods address this challenge by analyzing how information spreads across digital platforms.

Behavioral detection systems examine patterns such as posting frequency, timing regularity, interaction networks, and account coordination. Automated influence operations often produce statistical patterns that differ from those generated by organic user activity. For example, clusters of accounts may consistently post similar messages within very short time intervals or amplify one another’s content through synchronized interactions.
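The co-posting pattern described above can be sketched in a few lines. This is an illustrative example only: the `(account, text, timestamp)` tuple layout, the 60-second window, and the `min_shared` threshold are assumptions, and the pairwise comparison is quadratic per message group, so a production system would need a more scalable approach.

```python
from collections import defaultdict
from itertools import combinations


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variations still match."""
    return " ".join(text.lower().split())


def co_posting_pairs(posts, window_seconds=60.0):
    """Count how often two accounts post the same text within the window.

    posts: iterable of (account, text, timestamp_in_seconds) tuples.
    Returns {(account_a, account_b): count} with each pair sorted.
    """
    by_text = defaultdict(list)
    for account, text, timestamp in posts:
        by_text[normalize(text)].append((timestamp, account))

    pair_counts = defaultdict(int)
    for items in by_text.values():
        items.sort()  # chronological order, so t2 >= t1 below
        for (t1, a1), (t2, a2) in combinations(items, 2):
            if a1 != a2 and t2 - t1 <= window_seconds:
                pair_counts[tuple(sorted((a1, a2)))] += 1
    return dict(pair_counts)


def flag_coordinated(posts, window_seconds=60.0, min_shared=2):
    """Return account pairs that co-posted at least min_shared times."""
    pairs = co_posting_pairs(posts, window_seconds)
    return {pair for pair, count in pairs.items() if count >= min_shared}
```

Requiring repeated co-posting (`min_shared`) rather than a single coincidence reduces false positives from organic users who happen to share the same headline at the same time.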

Network analysis techniques are particularly useful for identifying coordinated campaigns. Graph-based models can map relationships among accounts, identifying clusters that exhibit unusually dense patterns of interaction or shared narrative amplification. These techniques can reveal networks of accounts that collaborate to propagate specific messages, even when individual posts appear authentic.

Another promising approach involves propagation modeling, which examines how narratives spread through social networks over time. By analyzing the structure and velocity of information cascades, researchers can identify patterns associated with coordinated influence campaigns. For instance, artificial amplification often produces unusually rapid propagation across multiple network clusters compared with typical organic information diffusion.
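One simple propagation feature is how many distinct network clusters a narrative reaches shortly after it first appears. The sketch below is an assumption-laden illustration: the `(account, timestamp)` share format and the `cluster_of` mapping (for example, the output of a community-detection step) are hypothetical inputs, and any threshold for "unusually rapid" would have to be calibrated against organic baselines.

```python
def clusters_reached_within(shares, cluster_of, window_seconds):
    """Count distinct clusters reached within window_seconds of the first share.

    shares: list of (account, timestamp_in_seconds) tuples for one narrative.
    cluster_of: dict mapping account -> cluster label (assumed precomputed).
    Accounts missing from cluster_of are ignored.
    """
    if not shares:
        return 0
    start = min(timestamp for _, timestamp in shares)
    reached = {
        cluster_of[account]
        for account, timestamp in shares
        if account in cluster_of and timestamp - start <= window_seconds
    }
    return len(reached)
```

A narrative that reaches many otherwise unconnected clusters within minutes is a candidate for closer review, although breaking news can produce the same pattern organically.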

Behavioral detection methods are especially important for identifying AI-driven influence systems, including coordinated networks of automated agents or synthetic personas. Because such systems may generate linguistically sophisticated content, detecting them requires identifying coordination signals that reveal underlying automation.

Linguistic Forensic Analysis

A third category of technical countermeasures focuses on analyzing linguistic patterns within digital communications. Linguistic forensic methods draw on insights from computational linguistics, psychology, and communication theory to identify features associated with manipulated or coordinated messaging.

One widely used approach involves psycholinguistic profiling, which analyzes patterns in language usage to identify stylistic or cognitive characteristics associated with deceptive communication. Tools such as Linguistic Inquiry and Word Count (LIWC) analyze features including emotional tone, pronoun usage, syntactic complexity, and cognitive processing indicators.

Frameworks such as Communication Accommodation Theory and Information Manipulation Theory provide theoretical foundations for analyzing how language may be strategically adapted to influence audiences. For example, coordinated propaganda campaigns may exhibit distinctive linguistic patterns, such as heightened emotional language, simplified narrative structures, or consistent framing strategies across multiple accounts.

Machine learning models trained on large corpora of authentic and manipulated text can identify these patterns automatically. Natural language processing systems can detect subtle indicators of automated content generation or coordinated messaging, including repetitive phrasing, unusual lexical distributions, or stylistic inconsistencies across accounts.
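Repetitive phrasing across accounts, one of the indicators mentioned above, can be approximated without any trained model by comparing overlapping word sequences. The following is a minimal sketch: the three-word shingle size and the 0.5 similarity threshold are illustrative assumptions, and Jaccard similarity over word shingles is a generic near-duplicate technique rather than a method attributed to any tool named in this article.

```python
from itertools import combinations


def shingles(text: str, n: int = 3) -> set:
    """Return the set of n-word sequences (shingles) in the text."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of intersection over size of union."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicate_pairs(messages: dict, threshold: float = 0.5, n: int = 3):
    """Find account pairs whose messages share most of their phrasing.

    messages: dict mapping account -> message text.
    Returns a list of (account_a, account_b, similarity) tuples.
    """
    sets = {account: shingles(text, n) for account, text in messages.items()}
    results = []
    for a, b in combinations(sorted(sets), 2):
        similarity = jaccard(sets[a], sets[b])
        if similarity >= threshold:
            results.append((a, b, similarity))
    return results
```

As the next paragraph notes, modern language models can paraphrase freely, so a lexical check like this catches only low-effort campaigns and works best as one signal among several.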

However, linguistic detection methods face increasing challenges as generative language models improve. Advanced AI systems are capable of producing highly diverse and contextually appropriate language, reducing the reliability of simple stylometric indicators. As a result, linguistic analysis is most effective when combined with behavioral and network-level detection techniques.

Toward Integrated Technical Defense Systems

Each category of technical countermeasures—content analysis, behavioral detection, and linguistic forensics—addresses different aspects of the disinformation problem. Content-based detection systems are effective for identifying manipulated media such as deepfakes. Behavioral analysis reveals coordinated influence operations that rely on amplification rather than fabrication. Linguistic analysis can uncover subtle indicators of coordinated messaging strategies.

Individually, however, these approaches have limitations. Content-based detection may fail when adversaries distribute authentic but misleading material. Behavioral detection requires large-scale data access and may be constrained by platform privacy policies. Linguistic analysis becomes less reliable as generative AI models produce increasingly natural language.

Consequently, many researchers advocate the integration of these techniques into multi-layer detection architectures that combine signals from multiple analytical domains. By correlating content-based indicators with behavioral patterns and linguistic features, integrated systems can generate more reliable assessments of information authenticity and influence activity.

Such architectures form the technical foundation for broader disinformation defense frameworks. When combined with provenance verification systems, AI security controls, and human oversight and threat intelligence mechanisms, these technologies enable the creation of resilient information ecosystems capable of detecting and mitigating coordinated influence operations at scale.

Provenance and Identity as Foundational Infrastructure for Defense Frameworks

Provenance is becoming a core security layer for AI systems, analogous to TLS for web traffic. Its functions include:

- verifying authenticity of training data,

- preventing contamination of AI models,

- enabling traceability of synthetic media,

- supporting regulatory compliance and auditability.

Without provenance, AI training pipelines may ingest adversarial content, creating feedback loops of disinformation.

Reproducible provenance—cryptographically verifiable metadata documenting the origin, transformation, and chain‑of‑custody of digital assets—is essential for restoring trust in digital content. Systems such as:

- C2PA (Coalition for Content Provenance and Authenticity),

- cryptographic watermarking,

- secure metadata chains, Software Bills of Materials (SBOMs), and blockchains,

- decentralized identity

provide cryptographic mechanisms for distinguishing authentic content and identities from manipulated media, identity, and code.
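The chain-of-custody idea can be illustrated with a small hash-linked log in which each entry commits to the content hash and to the previous entry. This is a simplified sketch and not the C2PA manifest format: the entry fields and function names are hypothetical, and an HMAC with a shared key stands in for the asymmetric digital signatures that real provenance systems use, purely so the example runs with the Python standard library.

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64  # placeholder previous-hash for the first entry


def _digest(record: dict) -> str:
    """Hash a record deterministically (sorted keys give stable JSON)."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


def append_entry(chain: list, action: str, content: bytes, key: bytes) -> list:
    """Return a new chain with one more provenance entry appended."""
    body = {
        "action": action,
        "content_hash": hashlib.sha256(content).hexdigest(),
        "prev_hash": chain[-1]["entry_hash"] if chain else GENESIS,
    }
    entry_hash = _digest(body)
    # HMAC is a stand-in for a real signature (assumption for illustration).
    tag = hmac.new(key, entry_hash.encode(), hashlib.sha256).hexdigest()
    return chain + [{**body, "entry_hash": entry_hash, "tag": tag}]


def verify_chain(chain: list, final_content: bytes, key: bytes) -> bool:
    """Check every link, every tag, and that the last entry matches the content."""
    prev = GENESIS
    for entry in chain:
        body = {k: entry[k] for k in ("action", "content_hash", "prev_hash")}
        expected_hash = _digest(body)
        expected_tag = hmac.new(key, expected_hash.encode(), hashlib.sha256).hexdigest()
        if (
            entry["prev_hash"] != prev
            or entry["entry_hash"] != expected_hash
            or not hmac.compare_digest(entry["tag"], expected_tag)
        ):
            return False
        prev = entry["entry_hash"]
    final_hash = hashlib.sha256(final_content).hexdigest()
    return bool(chain) and chain[-1]["content_hash"] == final_hash
```

Because each entry includes the hash of its predecessor, altering or removing any earlier step invalidates every later link, which is the property that makes the edit history tamper-evident.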

The C2PA provides an open technical standard for publishers, creators and consumers to establish the origin and edits of digital content. It’s called Content Credentials, and it ensures content complies with standards as the digital ecosystem evolves. Some key founders and users of this technology include Adobe, Microsoft, BBC, OpenAI, Meta, and the New York Times among many others.

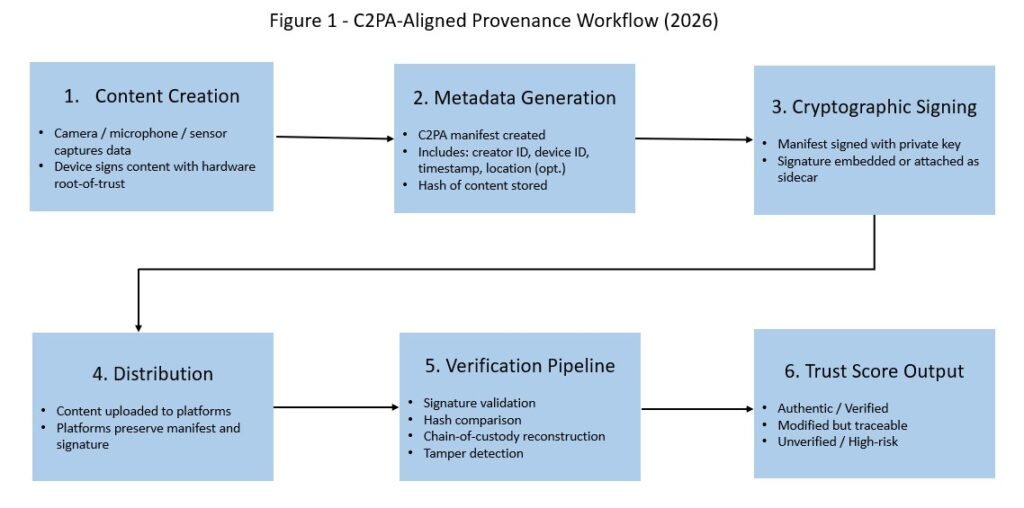

Figure 1 depicts a workflow showing how C2PA could be used as a provenance tool to track the authenticity of content.

Implementing blockchain technology can also help to verify the authenticity and provenance of videos at the source and can help ensure content has not been altered. One example of a blockchain technology that is designed for content authenticity is WordProof. WordProof was founded to combat online issues like distrust, plagiarism, and fake news through blockchain technology. Their solution, the WordProof Timestamp Ecosystem, empowers users to build trust online.

SBOMs give you a detailed inventory of software components, making it easier to identify vulnerabilities quickly. Digital signatures confirm the authenticity and integrity of components, preventing tampering. Combining an SBOM with a cryptographic signature creates a transparent, trustworthy software supply chain and helps you manage risks to AI code proactively.

Decentralized identity is a related cryptography-based technology that can help reduce the creation and spread of disinformation. Identity refers to being an individual, i.e., a distinct human entity. Identity can also refer to non-human entities, such as an organization, an authority, or, in particular, an AI agent.

Agents interact with other agents, APIs, and systems in intricate, often cascading ways, leading to complex chains of delegated authority. This creates a profound accountability challenge, as delegated authority can cascade through multiple agents, obscuring responsibility if not managed appropriately. If a single compromised agent can autonomously delegate its (potentially elevated) permissions to a multitude of other agents, tracing the origin and scope of a malicious action becomes incredibly difficult, resulting in potentially untraceable malicious activities.
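One way to keep delegated authority traceable is to record every delegation as a link back to its parent and to forbid any agent from granting more than it holds. The sketch below is a hypothetical data model, not part of any named protocol: the `Delegation` class, the scope strings, and the agent names are assumptions made for illustration, and a real system would also sign each link cryptographically.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Delegation:
    """One link in a delegation chain: issuer grants scopes to subject."""
    issuer: str
    subject: str
    scopes: frozenset
    parent: Optional["Delegation"] = None


def delegate(parent: Delegation, subject: str, scopes) -> Delegation:
    """Delegate a subset of the parent's scopes to another agent."""
    requested = frozenset(scopes)
    if not requested <= parent.scopes:
        extra = sorted(requested - parent.scopes)
        raise PermissionError(f"cannot delegate scopes not held: {extra}")
    return Delegation(parent.subject, subject, requested, parent)


def trace(delegation: Delegation) -> list:
    """Return the chain of principals from the original issuer to the holder."""
    path = []
    current = delegation
    while current is not None:
        path.append(current.subject)
        root_issuer = current.issuer
        current = current.parent
    path.append(root_issuer)
    return list(reversed(path))
```

With this structure, a compromised agent cannot widen its permissions by delegating, and any action can be traced back through `trace` to the principal that originally granted the authority.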

AI agents need a unique cryptographically bound identity to operate effectively and securely within and across many different digital environments. A decentralized identity, along with verifiable credentials, is essential for authentication, authorization, and accountability for agents for these different environments.

Spoofing identities or creating synthetic identities is at the heart of a disinformation campaign. Requiring cryptographically signed identities, such as by using Decentralized Identity systems which are built on public blockchains like Ethereum, can provide accountability for actions while preserving privacy and allowing individuals to manage their own identity-related information. With decentralized identity solutions, a human or non-human entity (agent) can create identifiers and claim and hold their attestations without relying on central authorities, like service providers or governments. Frameworks like the Model Context Protocol (MCP) and the proposed Agent Name Service (ANS) are being developed to manage and secure these identities across various AI systems. Zero trust principles, decentralized identifiers, and verifiable credentials also need to be applied to these frameworks to ensure AI agents maintain a secure state over their life cycle.

Trust Scores and Reputation Systems

Trust scores and reputation systems can be essential soft power and technical tools to curb the spread of disinformation.

Trust Scores measure the degree of confidence an entity places in another entity or information source. Trust is context-specific and often directional (A trusts B to perform X reliably). Trust scores quantify perceived reliability, integrity, or competence based on past interactions, behavior, or observable cues, such as expertise.

Trust scores are a function of multiple evaluated parameters such as:

- provenance verification,

- source reputation,

- behavioral anomaly score,

- cross-platform propagation pattern,

- fact-check signals.
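A trust score built from these parameters could be as simple as a weighted sum. The sketch below assumes that every signal has already been normalized to [0, 1] and that anomaly-type signals are inverted so that higher always means more trustworthy. The weights and signal names are hypothetical; this article does not prescribe a formula, and real weights would need empirical calibration.

```python
# Hypothetical weights for illustration only; they sum to 1.
WEIGHTS = {
    "provenance": 0.30,           # 1.0 = provenance fully verified
    "source_reputation": 0.25,    # 1.0 = highly reputable source
    "behavioral_anomaly": 0.20,   # 1.0 = highly anomalous (inverted below)
    "propagation_anomaly": 0.15,  # 1.0 = highly anomalous (inverted below)
    "fact_check": 0.10,           # 1.0 = claims confirmed by fact-checkers
}
INVERTED = {"behavioral_anomaly", "propagation_anomaly"}


def trust_score(signals: dict) -> float:
    """Combine normalized signals into a trust score in [0, 1]."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = signals[name]
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
        # Anomaly signals count against trust, so flip them.
        total += weight * ((1.0 - value) if name in INVERTED else value)
    return round(total, 4)
```

A linear combination is easy to audit and explain, which matters if trust scores are to be shared across platforms, although it cannot capture interactions between signals.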

Reputation Systems aggregate historical feedback, peer feedback and endorsement ratings, or achievements to represent an entity’s overall credibility and past performance. Reputation is dynamic, reflecting both long-term patterns and recent behavior in context. Amazon seller ratings are an example of a reputation measure.

These systems are particularly relevant in distributed online environments where actors cannot directly verify each interaction.

Some examples of how they are applied include:

Online Media and News Platforms

- Assign trust scores to content creators or news outlets.

- Highlight high-reputation sources while downranking low-credibility originators.

- Enable users to make “informed” decisions about consumption and sharing.

Social Networks

- Detect coordinated inauthentic behavior or spam by weighting user actions against reputation.

- Implement badges or verification (e.g., LinkedIn verification, Reddit karma, Airbnb Superhost) to signal trustworthy actors.

Cybersecurity and IoT

- Evaluate device or node trustworthiness in networked systems, preventing malicious content injections.

- Support automated decision-making when humans must rely on distributed information.

Coordination with Artificial Intelligence

- AI systems can aggregate multi-source trust cues, identify anomalous behavior, and predict the reliability of emerging content in real-time.

- Probabilistic and graph-based reasoning allow the system to dynamically update trust as new evidence accrues.
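Dynamic trust updating can be illustrated with a Beta-distribution style reputation estimate, a common probabilistic approach in the trust-modeling literature. The class below is a minimal sketch: the `Reputation` name, the uniform prior, and the optional `forgetting` factor (which down-weights older evidence) are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Reputation:
    """Beta-style reputation: tracks positive and negative evidence."""
    positive: float = 0.0
    negative: float = 0.0
    forgetting: float = 1.0  # 1.0 keeps all history; lower values favor recent evidence

    def record(self, outcome_ok: bool) -> None:
        """Discount old evidence, then add the new observation."""
        self.positive *= self.forgetting
        self.negative *= self.forgetting
        if outcome_ok:
            self.positive += 1.0
        else:
            self.negative += 1.0

    @property
    def score(self) -> float:
        """Expected reliability under a uniform prior, in (0, 1)."""
        return (self.positive + 1.0) / (self.positive + self.negative + 2.0)
```

An entity with no history starts at 0.5, and each new observation moves the score toward the observed behavior. A forgetting factor below 1.0 makes the score react faster when a previously reliable source begins to misbehave.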

AI agentic systems also depend on trust and reputation systems. Finding reliable partners in open environments is a challenging task for software agents. Trust and reputation mechanisms address this problem by acting as sentinels in agent interactions: they detect and block users who try to abuse the trust system, and they help agents discover trusted peers to collaborate with on tasks.

Risk‑Ranked Threat / Technical Mitigation Table

The following table lists some of the top technical threats and associated mitigation measures for influence operations.

| Rank | Threat Category | Description | Impact | Technical Mitigation |
| --- | --- | --- | --- | --- |
| 1 | AI Swarms & Coordinated Synthetic Personas | Autonomous agents that mimic human behavior and coordinate influence operations | Severe | Behavioral detection, network analysis |
| 2 | Deepfake‑Driven Sabotage | Synthetic audio/video used for impersonation, fraud, political disruption | Severe | Multimodal deepfake detection, provenance |
| 3 | Training Data Contamination | Poisoned or manipulated data entering AI training pipelines | High | Provenance, secure training, model integrity |
| 4 | Cross‑Platform Narrative Amplification | Coordinated spread across social, messaging, and fringe platforms | High | Propagation graph modeling |
| 5 | Metadata Stripping & Provenance Evasion | Attackers removing or altering provenance metadata | High | C2PA enforcement, cryptographic signatures |
| 6 | Adversarial Prompting & Model Manipulation | Attempts to coerce AI systems into harmful outputs | Medium | Adversarial input filtering |
| 7 | Synthetic Identity Ecosystems | AI‑generated personas used for fraud or influence | Medium | Decentralized identity, behavioral analysis, cross-source validation |
| 8 | Low‑Quality Synthetic Content Flooding (Astroturfing) | Volume‑based noise to overwhelm verification systems | Medium | Automated triage / throttling, AI detection, trust scoring |
| 9 | LLM-generated microtargeted propaganda | Highly personalized, persuasive content that manipulates individuals or groups | Medium | Data protection, AI content detection, watermarking, behavioral analysis, and transparency mechanisms |

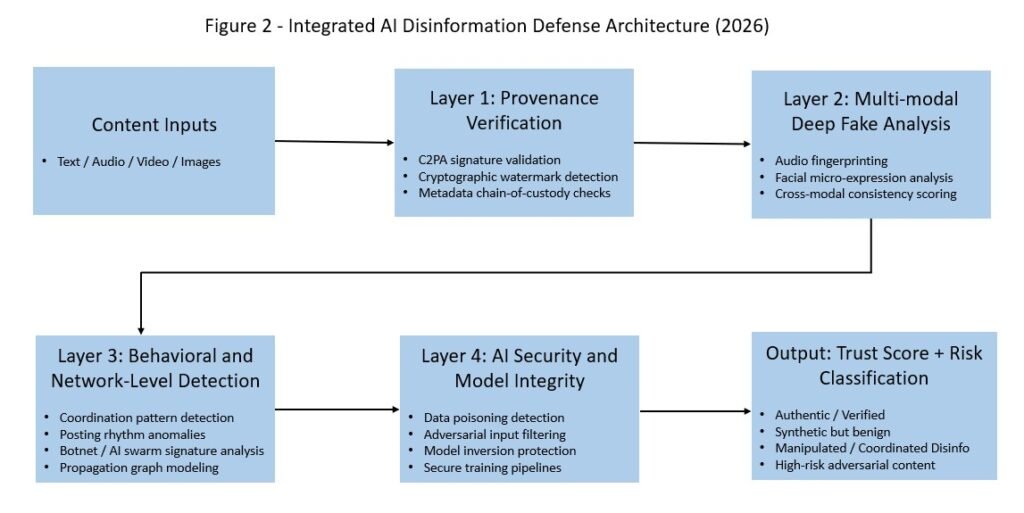

The mitigation measures discussed above can be combined into a multi-layer technical defense architecture, as shown in Figure 2. Content to be checked for disinformation or misinformation (text, audio, video, images) is input to a pipeline of technical countermeasures and checks. Layer 1 checks the provenance of the content. The content is then passed through several other layers of checks and countermeasures, resulting in a trust score. The trust score can then be used to inform human-centered resilience strategies such as soft power and community-based interventions.

This architecture is not merely reactive. It is proactive, adaptive, and resilient: capable of countering the next generation of AI‑driven malign influence and evolving as adversaries evolve. It also introduces trust-score outputs as a decision interface for human-centered resilience. Agreeing on global standards for how trust scores are defined and computed is an important step toward a non-partisan framework for sharing timely disinformation threat information among platforms and regulatory bodies.

Malign Influence Kill Chains and Counter‑Influence Frameworks

As AI‑driven disinformation becomes more automated, adaptive, and scalable, defenders increasingly rely on structured kill chains and counter‑influence frameworks to map, disrupt, and pre‑empt adversarial operations. These models provide a systematic way to understand how malign influence campaigns are planned, deployed, and amplified — and where defensive interventions can be most effective. Integrating these frameworks with provenance systems and AI‑security controls strengthens the resilience of democratic institutions and information ecosystems.

The MITRE Malign Influence Kill Chain, developed in partnership with the U.S. Office of the Director of National Intelligence and the Department of Homeland Security, provides one of the most comprehensive models for understanding adversarial influence operations. It breaks malign influence into sequential stages — from actor preparation to narrative deployment to audience manipulation — and identifies opportunities for defenders to intervene at each step.

A core principle of the MITRE model is the importance of identifying genuine and authentic voices throughout the chain. By elevating authentic discourse and exposing inauthentic manipulation, defenders can diminish the adversary’s intended psychological, political, or social effects.

When integrated with AI‑driven detection systems and provenance verification, the MITRE kill chain becomes even more powerful:

- Provenance metadata can disrupt early‑stage content fabrication.

- Behavioral detection can expose coordinated amplification.

- AI‑security controls can prevent model manipulation that feeds later stages of the chain.

This alignment turns the kill chain from a descriptive model into an actionable defense architecture.

The DISARM Framework (Defending Integrity Against Strategic Adversarial Manipulation) is an open‑source, community‑maintained master framework designed to unify global efforts against influence operations. DISARM provides:

- a shared taxonomy of tactics, techniques, and procedures (TTPs),

- a structured methodology for analyzing influence campaigns,

- a common language for governments, researchers, and platforms.

DISARM’s modular structure mirrors cybersecurity frameworks like MITRE ATT&CK, but is tailored to information manipulation. It covers the full lifecycle of influence operations, including:

- actor intent,

- narrative construction,

- audience targeting,

- amplification strategies,

- platform exploitation,

- psychological manipulation.

When combined with provenance systems such as C2PA, DISARM enables defenders to trace not only what content is manipulated, but how and why it propagates.

The FERMI project (Fighting Disinformation) extends kill chain thinking into the European context, emphasizing:

- early detection of narrative seeding,

- cross‑platform tracking,

- forensic reconstruction of disinformation flows.

FERMI’s kill chain model highlights the importance of pre‑narrative analysis: identifying the ideological, geopolitical, or economic motivations that precede content creation. Because adversaries increasingly use AI to generate narratives before deploying them, pre-narrative analysis can surface behavioral signs of narrative seeding and synthetic consensus.

The Center for Security and Emerging Technology (CSET) introduced a disinformation kill chain that focuses on the technical and operational aspects of modern influence operations. It emphasizes analysis of:

- data collection,

- content generation,

- distribution infrastructure,

- amplification mechanisms,

- feedback loops.

CSET’s model is particularly relevant to AI‑driven disinformation because it explicitly accounts for:

- automated content generation,

- synthetic persona management,

- algorithmic exploitation,

- model‑driven narrative optimization.

Integrating CSET’s kill chain with AI‑security controls helps prevent adversaries from poisoning training data, manipulating recommender systems, or exploiting model vulnerabilities.

The OODA loop (Observe–Orient–Decide–Act), originally developed for military decision‑making, has been widely adopted in counter‑disinformation strategy. Influence operations are dynamic, adaptive, and iterative — and defenders must respond in real time.

AI‑driven detection systems accelerate the defender’s OODA loop:

- Observe: Multimodal detection and provenance signals

- Orient: Behavioral and network‑level analysis

- Decide: Automated risk scoring and triage

- Act: Content labeling, demotion, or counter‑messaging

The faster the defender’s loop, the more effectively they can disrupt adversarial influence cycles.
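The four steps above can be sketched as a small pipeline. This is a minimal illustration under stated assumptions, not a reference implementation: the `Observation` fields, the equal weighting in `orient`, and the 0.7 and 0.4 thresholds in `decide` are all hypothetical, and counter‑messaging is left out to keep the example short.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Observe: raw signals gathered for one piece of content."""
    deepfake_score: float       # 0.0 (likely authentic) to 1.0 (likely synthetic)
    has_valid_provenance: bool  # e.g. a verified content credential
    coordination_score: float   # 0.0 to 1.0, from behavioral/network analysis


def orient(obs: Observation) -> float:
    """Orient: fuse the signals into one risk score in [0, 1].
    The weights are illustrative assumptions."""
    risk = 0.5 * obs.deepfake_score + 0.5 * obs.coordination_score
    if obs.has_valid_provenance:
        risk *= 0.5  # valid provenance lowers, but does not erase, the risk
    return min(max(risk, 0.0), 1.0)


def decide(risk: float) -> str:
    """Decide: map the risk score to a triage action (hypothetical thresholds)."""
    if risk >= 0.7:
        return "demote"
    if risk >= 0.4:
        return "label"
    return "allow"


def act(content_id: str, action: str) -> str:
    """Act: a real system would call a platform API; here we only report."""
    return f"{content_id}: {action}"


def ooda_step(content_id: str, obs: Observation) -> str:
    """Run one full Observe-Orient-Decide-Act pass for a content item."""
    return act(content_id, decide(orient(obs)))


result = ooda_step("post-1", Observation(0.9, False, 0.8))
```

Because each pass is a pure function of the observed signals, the loop can be rerun whenever new signals arrive, which is what shortening the defender's cycle amounts to in practice.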

ARAC International’s Counter Malign Influence Division represents a growing trend: private‑sector organizations building dedicated counter‑influence capabilities. ARAC focuses on:

- media literacy,

- cybersecurity awareness,

- content analysis,

- counter‑disinformation operations,

- insider threat awareness,

- election integrity.

ARAC’s counter-influence framework reflects a broader shift toward whole‑of‑society defense, where governments, civil society, and private organizations collaborate to counter malign influence.

ARAC’s emphasis on media literacy and insider threat awareness complements the technical defenses described earlier. Provenance systems and AI‑security controls can detect manipulation, but human‑centered resilience is essential for long‑term stability.

Integrating Kill Chains with Technical Countermeasures and Soft Power

Kill chain models and influence frameworks become significantly more powerful when integrated with the technical countermeasures and soft power approaches discussed earlier. This integration enables defenders to:

- detect manipulation and development of narratives earlier,

- disrupt amplification pathways and synthetic consensus,

- attribute adversarial activity,

- prevent training data contamination,

- accelerate response cycles to AI swarms.

The following table provides an example of what countermeasures may be applied at each kill chain stage.

| Kill Chain Stage | Countermeasure |
| --- | --- |
| Narrative Creation | Provenance watermarking |
| Persona Creation | Identity verification |
| Distribution | Behavioral detection |
| Amplification | Network analysis |
| Audience Manipulation | Pre-bunking |
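The table can also be expressed as a simple lookup that a triage tool might consult. The sketch below is only an illustration: the `Stage` enum, the `COUNTERMEASURES` mapping, and the `countermeasures_from` helper are hypothetical names, and the idea of listing the countermeasures for the detected stage and every later stage is an assumption about how such a table might be used.

```python
from enum import Enum


class Stage(Enum):
    """Kill chain stages in the order given in the table."""
    NARRATIVE_CREATION = "Narrative Creation"
    PERSONA_CREATION = "Persona Creation"
    DISTRIBUTION = "Distribution"
    AMPLIFICATION = "Amplification"
    AUDIENCE_MANIPULATION = "Audience Manipulation"


# One example countermeasure per stage, copied from the table.
COUNTERMEASURES = {
    Stage.NARRATIVE_CREATION: "Provenance watermarking",
    Stage.PERSONA_CREATION: "Identity verification",
    Stage.DISTRIBUTION: "Behavioral detection",
    Stage.AMPLIFICATION: "Network analysis",
    Stage.AUDIENCE_MANIPULATION: "Pre-bunking",
}


def countermeasures_from(stage: Stage):
    """Return (stage name, countermeasure) pairs for `stage` and all later
    stages, in kill chain order."""
    stages = list(Stage)  # Enum members iterate in definition order
    return [(s.value, COUNTERMEASURES[s]) for s in stages[stages.index(stage):]]


remaining = countermeasures_from(Stage.AMPLIFICATION)
```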

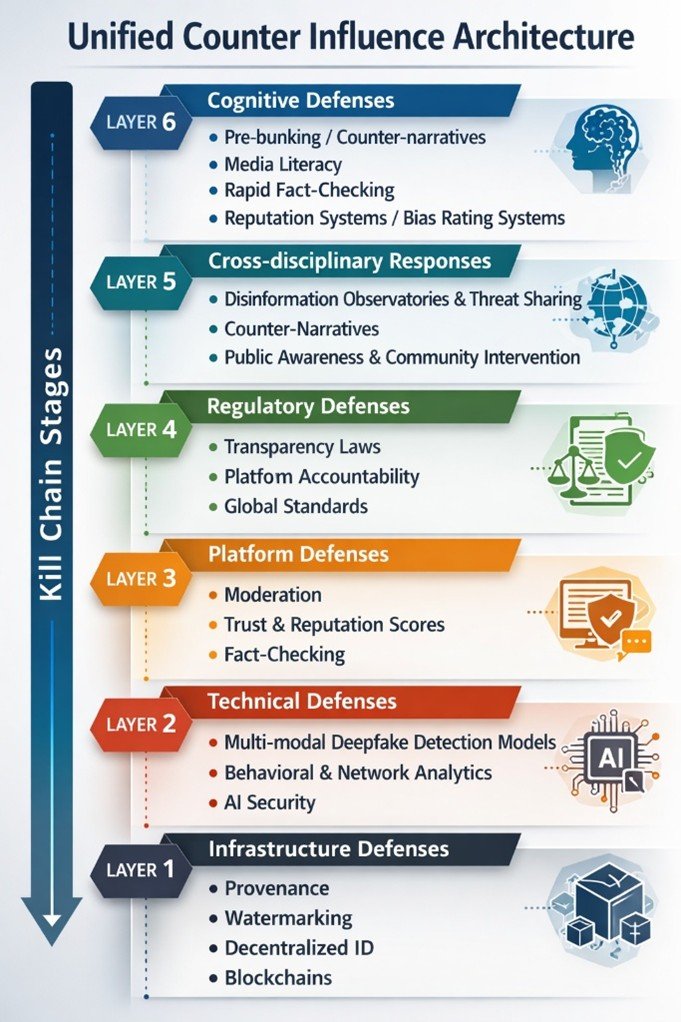

As shown in the table, provenance, AI‑security systems, and soft power approaches operationalize the kill chain: they turn theoretical models into automated, scalable defense mechanisms operating within a unified counter‑influence architecture – a holistic, multi‑layered defense system as depicted in Figure 3.

Figure 3 – Unified Counter Influence Architecture

A unified architecture needs to be the foundation of a global ecosystem that includes national and international regulatory and standards bodies, private organizations, fact-checkers, and countermeasure tool providers. Such an ecosystem is needed to maintain consistent vigilance against disinformation, cross-check what constitutes inauthentic information, manage trust scores, and share information about the TTPs used to create and spread disinformation.

What’s Next?

“Journalism is always the art of the incomplete. You get bits and pieces.” – Anthony Shadid

Despite the best countermeasure technology, processes, and people, disinformation will persist and even thrive, propelled by generative AI. One problem is that authentic information can be taken out of context to produce disinformation. Just a small bit of disinformation, mixed with pieces of authentic information, can sway human perception. Authentic context can also be lost among the bits and pieces of information that different channels provide, resulting in foggy critical analysis and decision-making. Therefore, to make a “truth ecosystem” truly effective, one must be able to provably reconstruct and evaluate the context: the what, when, who, where, how, and why. Provenance and the other technical countermeasure tools can generally provide the what, when, who, and where, and sometimes the how. But the why is beyond what provenance or any of these other tools provide. Meanwhile, the why, who, and what of a person’s belief system is exactly what the adversary is trying to influence.

A personalized AI filter may help provide the cognitive defenses needed to evaluate information context and supply “objective why” information. Similar to a spam filter or a phishing filter, such a personalized AI filter would work with global fact-checking sources and trust / reputation systems, while also providing the technical countermeasures for assessing the authenticity of images, video, and audio.
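To make the spam-filter analogy concrete, here is a minimal sketch of how such a filter might combine its inputs. Everything in it is an assumption made for illustration: the three signals in `Signals`, the per-user `FilterProfile` with its weights and thresholds, and the `evaluate` function are hypothetical and do not describe any existing product. The filter returns its reasons alongside the verdict so that the reader sees the context and not just a label.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Signals:
    """Inputs for one item; None means the signal is unavailable."""
    fact_check_rating: Optional[float]   # 0.0 (refuted) to 1.0 (confirmed)
    source_reputation: Optional[float]   # 0.0 (untrusted) to 1.0 (trusted)
    media_authenticity: Optional[float]  # 0.0 (likely fake) to 1.0 (likely real)


@dataclass
class FilterProfile:
    """Per-user settings, analogous to a spam filter's sensitivity.
    Default weights and thresholds are illustrative assumptions."""
    warn_below: float = 0.6
    hide_below: float = 0.3
    weights: dict = field(default_factory=lambda: {
        "fact_check_rating": 0.5,
        "source_reputation": 0.3,
        "media_authenticity": 0.2,
    })


def evaluate(signals: Signals, profile: FilterProfile):
    """Return (verdict, reasons) using a weighted average of available signals."""
    available = {k: v for k, v in vars(signals).items() if v is not None}
    if not available:
        return "unverified", ["no signals available"]
    total_weight = sum(profile.weights[k] for k in available)
    trust = sum(profile.weights[k] * v for k, v in available.items()) / total_weight
    reasons = [f"{k}={v:.2f}" for k, v in sorted(available.items())]
    if trust < profile.hide_below:
        return "hide", reasons
    if trust < profile.warn_below:
        return "warn", reasons
    return "show", reasons


verdict, reasons = evaluate(Signals(0.1, 0.2, None), FilterProfile())
```

Missing signals are skipped and the remaining weights renormalized, so an item with no fact-check yet is judged on what is known instead of being treated as false.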

This concludes Part 2 of this two-part article. Let me know of any emerging ways you know about to combat disinformation as well as your views on this topic. And thanks to my subscribers and visitors to my site for checking out ActiveCyber.net! Please give us your feedback because we’d love to know some topics you’d like to hear about in the area of active cyber defenses, artificial intelligence, authenticity, quantum cryptography, risk assessment and modeling, autonomous security, digital forensics, securing OT / IIoT and IoT systems, Augmented Reality, or other emerging technology topics. Also, email chrisdaly@activecyber.net if you’re interested in interviewing or advertising with us at Active Cyber™.