4/13/2026

As I was writing this article, I was reminded of my teen years, when I read Isaac Asimov’s Foundation Trilogy and became fascinated by the theory of psychohistory, a fictional science developed by the fictional mathematician Hari Seldon that forms a prominent backdrop to the story. Psychohistory combines mathematics, sociology, and statistics to predict the behavior of large populations. The trilogy is essentially about modeling human belief and behavior at a macro scale, decades before modern AI, and about how subtle psychological and informational influences can shape civilizations. Today, we are seeing the emergence of belief systems: ways to simulate and influence societal outcomes by modeling individual human dynamics on a micro scale. Unlike psychohistory, these systems are based on real sciences and theories, and they hold great potential to do good or to do tremendous harm. Will there be a Second Foundation to secretly guide humanity on the path to good? Or do we need to create explicit safeguards for these belief systems to ensure humanity doesn’t go off the rails? What is your view? Read the article below to find out more and send me your feedback.

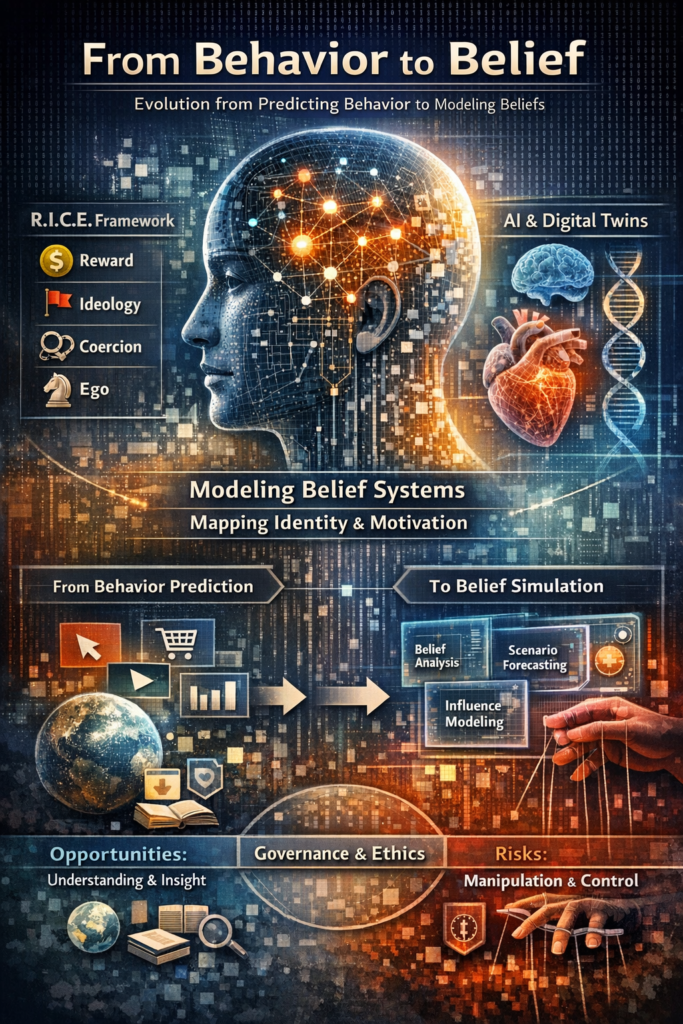

In intelligence work, understanding why someone believes or acts is often more valuable than knowing what they believe. The Central Intelligence Agency formalized this insight through its research in cognitive science, resulting in the RICE framework – Reward, Ideology, Coercion, and Ego – a model designed to map the motivational architecture of human behavior.

Digital twins originated in engineering: virtual replicas of physical systems used for prediction and optimization. Programs like DARPA’s VITAL initiative demonstrate how far this paradigm has advanced—creating high-fidelity simulations of the human cardiovascular system to predict medical outcomes in real time.

Now cognitive science, digital twins, and artificial intelligence are converging and evolving into something far more profound: a computational mirror of belief itself. Systems that combine frameworks like RICE with advanced modeling techniques and active inference are beginning to approximate something once thought uniquely human: the structured, evolving architecture of belief.

As digital systems evolve from predicting behavior to modeling belief itself, a new class of infrastructure is emerging—one that demands not only technical understanding, but governance, oversight, and public accountability.

The Shift from Behavior to Belief

For the past two decades, the dominant paradigm in digital technology has been behavioral prediction. Platforms learned to anticipate what users would click, purchase, or watch, optimizing engagement through increasingly sophisticated machine learning systems.

This paradigm is now approaching a threshold.

The next generation of systems will not merely predict behavior—they will model the beliefs, identities, and cognitive processes that generate behavior. These systems, which can be described as “belief twins,” represent a structural shift in the relationship between individuals and information environments.

Unlike traditional data profiles, a belief twin is not a record of what a person has done. It is a dynamic model of how a person interprets reality, how they update their beliefs, and under what conditions those beliefs might change. The implications are not only technological. They are epistemic, societal, and ultimately political.

Being able to forecast how a particular person will respond to a stimulus has clear value in UX, marketing, and social sciences. Examples include:

- Missing-data imputation: Fill in skipped survey items using the twin’s predictions.

- Shorter surveys: Ask respondents only a subset of questions, then infer the rest via the twin.

- Hard-to-reach participants: Use data from one-time interviews to build twins that stand in for groups that are expensive or impractical to recruit repeatedly and follow over time.

- Journey orchestration: Anticipate user reactions to different touchpoints and tailor experiences accordingly.

- Usability issues: Preemptively identify usability hurdles or emotional reactions to interface changes.

In principle, digital twins may produce more realistic results than synthetic users in each of these examples, because they are built from the nuanced complexity of a single real individual rather than from aggregated, averaged data representing a group.
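To make the missing-data imputation and shorter-survey examples concrete, here is a minimal Python sketch contrasting a group-average fill-in, closer to what a synthetic user provides, with a twin-style fill-in that conditions on the respondent's own answers. The survey items, scale groupings, and function names are hypothetical, and a same-scale average is only a stand-in for whatever learned model a real twin would use.

```python
from statistics import mean

# Hypothetical survey layout: items grouped into scales assumed to measure one attitude.
SCALES = {
    "trust_in_institutions": ["q1", "q2", "q3", "q4"],
    "openness_to_change": ["q5", "q6", "q7"],
}

def group_mean_impute(item, population):
    """Synthetic-user style baseline: fill the gap with the average answer of all respondents."""
    return mean(r[item] for r in population if r.get(item) is not None)

def twin_impute(item, responses):
    """Twin style: fill the gap from this respondent's own answers on the same scale."""
    for items in SCALES.values():
        if item in items:
            own = [responses[i] for i in items
                   if i != item and responses.get(i) is not None]
            if own:
                return mean(own)
    raise ValueError(f"no same-scale answers available to impute {item!r}")

# Usage: 1-5 Likert answers; None marks a skipped item.
population = [
    {"q1": 5, "q2": 4, "q3": 5, "q4": 5},
    {"q1": 1, "q2": 2, "q3": 1, "q4": 2},
    {"q1": 3, "q2": 3, "q3": 3, "q4": 3},
]
respondent = {"q1": 1, "q2": 1, "q3": None, "q4": 2}
print(group_mean_impute("q3", population))      # 3
print(round(twin_impute("q3", respondent), 2))  # 1.33
```

For this respondent, the group average (3) hides the low trust their other answers already reveal; the individual estimate (about 1.33) preserves it.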

RICE Reimagined: Encoding Motivation into Machines

At a deeper level, belief-system digital twins are operationalizing something strikingly similar to the CIA’s RICE model. Each RICE component maps naturally onto computational constructs:

- Reward → Utility functions and reinforcement signals

- Ideology → Core values modules and belief graphs

- Coercion → Constraint modeling, risk sensitivity, and aversion states

- Ego → Identity models, status preferences, and self-consistency drives

By encoding these motivational drivers, AI systems can simulate not just decisions, but the reasons behind them. This is a fundamental shift: from modeling behavior to modeling intentionality.
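One way to picture this mapping is a per-person profile of RICE weights that scores options and, just as importantly, reports which driver produced the score. The sketch below assumes a linear weighted sum, with the coercion weight read as risk sensitivity; the class names, features, and numbers are hypothetical illustrations, not a documented CIA or vendor model.

```python
from dataclasses import dataclass

@dataclass
class RiceProfile:
    """Hypothetical per-person weights in [0, 1] on each RICE driver."""
    reward: float    # utility / reinforcement sensitivity
    ideology: float  # weight on alignment with core values
    coercion: float  # risk sensitivity: aversion to threatened loss or pressure
    ego: float       # weight on status and self-image

@dataclass
class Option:
    """Hypothetical features of one choice, each scored in [-1, 1]."""
    name: str
    gain: float
    value_alignment: float
    threat: float
    status_effect: float

def contributions(p: RiceProfile, o: Option) -> dict:
    """Per-driver contributions: the 'reasons' behind the score, not just the score."""
    return {
        "reward": p.reward * o.gain,
        "ideology": p.ideology * o.value_alignment,
        "coercion": -p.coercion * o.threat,  # threat lowers attractiveness
        "ego": p.ego * o.status_effect,
    }

def utility(p: RiceProfile, o: Option) -> float:
    # Linear weighted sum of the four drivers (a deliberately simple assumption).
    return sum(contributions(p, o).values())

def choose(p: RiceProfile, options: list) -> Option:
    return max(options, key=lambda o: utility(p, o))

options = [
    Option("well-paid, values conflict", gain=0.9, value_alignment=-0.6, threat=0.1, status_effect=0.5),
    Option("modest pay, values aligned", gain=0.2, value_alignment=0.8, threat=0.1, status_effect=0.1),
]
idealist = RiceProfile(reward=0.2, ideology=0.9, coercion=0.3, ego=0.4)
careerist = RiceProfile(reward=0.9, ideology=0.1, coercion=0.5, ego=0.8)
print(choose(idealist, options).name)   # modest pay, values aligned
print(choose(careerist, options).name)  # well-paid, values conflict
print(contributions(idealist, options[0]))
```

The same two options produce opposite choices for the two profiles, and `contributions` exposes the "why": for the idealist, the ideology term outweighs the reward term.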

The emergence of belief twins is not the result of a single breakthrough, but of convergence across multiple domains: large-scale data collection, advances in machine learning, and growing insight into human cognition. A fully realized system would likely be composed of several integrated layers.

At its foundation lies a data layer, built from behavioral traces, language patterns, social networks, and contextual signals. These inputs are already captured at scale by major platforms. What changes is not the existence of the data, but its integration into a unified model of cognition.

Above this sits a representation layer, where raw data is transformed into structured belief systems. Here, beliefs are encoded as nodes within a network, connected by relationships such as support, contradiction, or dependency. These networks are embedded within high-dimensional vector spaces, allowing systems to detect nuance, similarity, and latent structure.
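A minimal sketch of such a representation layer follows, assuming beliefs are plain strings whose vectors come from some text encoder (the vectors here are made up) and using the three relation types named above. The class and method names are hypothetical.

```python
import math

RELATIONS = {"supports", "contradicts", "depends_on"}

class BeliefGraph:
    """Hypothetical belief network: nodes are belief statements, edges are typed relations."""

    def __init__(self):
        self.embedding = {}  # belief id -> vector (assumed to come from some text encoder)
        self.edges = []      # (source, relation, target) triples

    def add_belief(self, bid, vector):
        self.embedding[bid] = list(vector)

    def relate(self, src, relation, dst):
        if relation not in RELATIONS:
            raise ValueError(f"unknown relation {relation!r}")
        self.edges.append((src, relation, dst))

    def tensions(self):
        """Pairs linked by 'contradicts': candidate points of cognitive dissonance."""
        return [(s, d) for s, r, d in self.edges if r == "contradicts"]

    def dependents(self, bid):
        """Beliefs that depend on `bid` and would be exposed if it changed."""
        return [s for s, r, d in self.edges if r == "depends_on" and d == bid]

    def similarity(self, a, b):
        """Cosine similarity between two belief embeddings."""
        va, vb = self.embedding[a], self.embedding[b]
        dot = sum(x * y for x, y in zip(va, vb))
        norm = math.sqrt(sum(x * x for x in va)) * math.sqrt(sum(y * y for y in vb))
        return dot / norm

    def nearest(self, bid):
        """Most similar other belief in embedding space (latent structure)."""
        others = [o for o in self.embedding if o != bid]
        return max(others, key=lambda o: self.similarity(bid, o))

g = BeliefGraph()
g.add_belief("remote_work_productive", [0.9, 0.1, 0.0])
g.add_belief("trust_employer", [0.7, 0.5, 0.1])
g.add_belief("office_culture_essential", [-0.8, 0.2, 0.3])
g.relate("trust_employer", "supports", "remote_work_productive")
g.relate("office_culture_essential", "contradicts", "remote_work_productive")
g.relate("remote_work_productive", "depends_on", "trust_employer")
print(g.tensions())                         # [('office_culture_essential', 'remote_work_productive')]
print(g.dependents("trust_employer"))       # ['remote_work_productive']
print(g.nearest("remote_work_productive"))  # trust_employer
```

Typed edges answer structural questions (where the contradictions are, what rests on what), while the embeddings answer similarity questions that no explicit edge encodes.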

What distinguishes a belief twin from existing systems, however, is the identity layer—the component that models how individuals see themselves and their place within social groups. Identity acts as a filter through which all information is interpreted. Without it, systems can predict preferences; with it, they can anticipate resistance, reinterpretation, and selective acceptance of information.
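The filtering role of identity can be sketched as a gate on message acceptance. The logistic form, the weight of 3.0, and the parameter names below are assumptions chosen for illustration, not an established psychological model; the point is only the qualitative contrast the paragraph describes.

```python
import math

IDENTITY_WEIGHT = 3.0  # arbitrary illustrative weight, not an empirical estimate

def acceptance_probability(evidence_strength, identity_congruence, identity_salience):
    """
    Hypothetical identity filter (a logistic sketch, not an established model).
    evidence_strength:   persuasiveness of the message on its merits (>= 0)
    identity_congruence: -1 (threatens the person's group identity) .. +1 (affirms it)
    identity_salience:   0 (identity irrelevant to the topic) .. 1 (identity central)
    """
    # Low salience: acceptance tracks the evidence. High salience: the identity term
    # dominates, so an identity-threatening message is discounted however strong it is.
    score = ((1 - identity_salience) * evidence_strength
             + identity_salience * IDENTITY_WEIGHT * identity_congruence)
    return 1 / (1 + math.exp(-score))

# Same strong evidence (2.0), delivered in an identity-threatening vs affirming frame.
for salience in (0.0, 0.8):
    threat = acceptance_probability(2.0, -1, salience)
    affirm = acceptance_probability(2.0, +1, salience)
    print(f"salience={salience}: threatening {threat:.2f}, affirming {affirm:.2f}")
```

With identity salience at zero, both framings are accepted equally; at high salience, identical evidence is largely rejected when it threatens identity. A preference-only model has no way to express that difference.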

The system becomes fully dynamic through a belief evolution engine, drawing on principles associated with active inference, a framework developed in part by Karl Friston. Within this model, individuals continuously update beliefs based on the gap between expectation and experience, while also seeking information that minimizes uncertainty.
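In its simplest form, updating "based on the gap between expectation and experience" is a precision-weighted prediction error. The sketch below uses a single Gaussian belief, where that update coincides with exact Bayesian inference (a one-dimensional Kalman update), and models information seeking as picking the source with the largest expected information gain minus a hypothetical cost. This is a drastic simplification, not Friston's full active-inference formulation built on variational free energy; all names and numbers are illustrative.

```python
import math
from dataclasses import dataclass

@dataclass
class GaussianBelief:
    mean: float      # expected value, e.g. perceived risk of a policy on a 0-10 scale
    variance: float  # uncertainty about that expectation

    def update(self, observation: float, noise_variance: float) -> float:
        """Bayesian update driven by precision-weighted prediction error; returns the error."""
        prediction_error = observation - self.mean
        # Gain = prior variance / (prior variance + observation noise): confident beliefs
        # and noisy sources both shrink the step taken toward the observation.
        gain = self.variance / (self.variance + noise_variance)
        self.mean += gain * prediction_error
        self.variance *= 1 - gain
        return prediction_error

def expected_information_gain(belief: GaussianBelief, noise_variance: float) -> float:
    """Entropy reduction in nats from one observation (for Gaussians, outcome-independent)."""
    return 0.5 * math.log(1 + belief.variance / noise_variance)

def pick_source(belief: GaussianBelief, sources: dict) -> str:
    """sources maps name -> (noise_variance, cost); choose max information gain minus cost."""
    return max(sources,
               key=lambda s: expected_information_gain(belief, sources[s][0]) - sources[s][1])

belief = GaussianBelief(mean=3.0, variance=4.0)
error = belief.update(observation=7.0, noise_variance=4.0)
print(error, belief.mean, belief.variance)  # 4.0 5.0 2.0
sources = {"trusted_friend": (1.0, 0.1), "news_feed": (8.0, 0.0), "expert_report": (0.5, 0.6)}
print(pick_source(belief, sources))         # trusted_friend
```

A confident belief (small variance) or a noisy source (large noise variance) yields a small gain, so the same surprising observation moves the belief less.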

In computational form, this translates into systems that simulate how beliefs change over time—incorporating memory decay, emotional weighting, and reinforcement loops.
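A toy version of such a simulation for a single belief is sketched below. The functional forms (exponential decay toward a baseline, a linear emotional multiplier, and agreement-scaled impact as the reinforcement loop) and every parameter value are assumptions for illustration.

```python
import math

def simulate_belief(exposures, strength=0.5, baseline=0.5, decay_rate=0.1,
                    learning_rate=0.2, emotion_weight=1.5):
    """
    Hypothetical discrete-time model of one belief's strength in [0, 1].
    exposures: one entry per time step, either None (no exposure) or a
    (valence, emotion) pair: valence +1 supports the belief, -1 opposes it;
    emotion in [0, 1] is the emotional intensity of the content.
    Returns the strength after each step.
    """
    history = []
    for exposure in exposures:
        # Memory decay: without new input, strength relaxes exponentially toward a baseline.
        strength = baseline + (strength - baseline) * math.exp(-decay_rate)
        if exposure is not None:
            valence, emotion = exposure
            # Emotional weighting: intense content has a larger impact.
            impact = learning_rate * (1 + emotion_weight * emotion)
            # Reinforcement loop: content that agrees with the current leaning lands harder,
            # and each success raises the leaning, which amplifies the next exposure.
            agreement = strength if valence > 0 else 1 - strength
            strength = min(1.0, max(0.0, strength + valence * impact * agreement))
        history.append(strength)
    return history

# A calm supporting post, an emotional supporting post, two quiet days, then a rebuttal.
print(simulate_belief([(+1, 0.1), (+1, 0.9), None, None, (-1, 0.2)]))
```

Because impact scales with current agreement, a strongly held belief gains more from supporting content than it loses to equally strong opposing content, a crude stand-in for confirmation bias.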

Finally, a simulation interface allows operators—or automated processes—to run counterfactual scenarios: testing how an individual might respond to specific information, identifying which beliefs are most malleable, and forecasting how attitudes may evolve under different conditions.
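The counterfactual workflow can be sketched as replaying different scenarios against copies of the same belief state, and probing each belief with a small push to estimate its malleability. The response rule, the `entrenchment` parameter, and the scenario names are hypothetical; a real system would substitute its own belief-evolution model for `respond`.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Belief:
    name: str
    strength: float      # current agreement, 0..1
    entrenchment: float  # hypothetical: 0 = easily moved, 1 = effectively fixed

def respond(belief: Belief, push: float) -> Belief:
    """Hypothetical response rule: a push in [-1, 1] moves strength, damped by entrenchment."""
    new = belief.strength + push * (1 - belief.entrenchment)
    return replace(belief, strength=min(1.0, max(0.0, new)))

def run_counterfactuals(belief: Belief, scenarios: dict) -> dict:
    """Play each scenario (a sequence of pushes) against the same starting belief."""
    results = {}
    for label, pushes in scenarios.items():
        b = belief  # frozen dataclass: scenarios cannot alter the original
        for push in pushes:
            b = respond(b, push)
        results[label] = b.strength
    return results

def rank_by_malleability(beliefs, probe=0.1):
    """Sensitivity test: rank beliefs by how far a small probe push would move them."""
    def shift(b):
        return abs(respond(b, probe).strength - b.strength)
    return sorted(beliefs, key=shift, reverse=True)

brand = Belief("brand_preference", 0.5, 0.5)
scenarios = {
    "no_campaign": [],
    "single_ad": [0.2],
    "drip_campaign": [0.05] * 6,
    "backlash": [-0.3],
}
print(run_counterfactuals(brand, scenarios))
beliefs = [Belief("diet_choice", 0.6, 0.2), Belief("party_loyalty", 0.9, 0.9), brand]
print([b.name for b in rank_by_malleability(beliefs)])
# ['diet_choice', 'brand_preference', 'party_loyalty']
```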

Taken together, these layers form not just a model of behavior, but a model of belief itself.

Challenges of Belief Twins

Digital twin implementation for belief systems extends beyond traditional engineering and industrial applications, entering deeply human-centric, abstract, and ethically sensitive terrain. The primary challenges involve:

- Conceptualizing and formalizing beliefs as digitally representable entities.

- Ensuring data quality, privacy, and ethical integrity.

- Managing technical complexity, scalability, and dynamic variability.

- Incorporating human oversight and interdisciplinary collaboration for validation and trustworthy predictions.

Successfully addressing these challenges requires an interdisciplinary, ethically informed framework that balances technical rigor with cognitive, social, and cultural sensitivity.

1. Conceptual and Ontological Challenges

- Abstractness of Belief Systems: Unlike physical entities, beliefs are subjective, context-dependent, and often non-quantifiable. Representing them digitally requires translating qualitative attitudes, values, norms, and cognitive biases into measurable parameters – a nontrivial and often lossy process.

- Dynamic and Nonlinear Interactions: Belief systems evolve through social interactions, cultural norms, and personal experiences. These nonlinear dynamics are harder to model than the linear or physically constrained processes in conventional digital twins (DTs).

- No Clear Physical Twin: Traditional DTs rely on a tangible counterpart for calibration. For belief systems, defining a “physical twin” is ambiguous – one may use behavioral data, survey responses, or digital footprints as proxies, but these proxies are inherently partial and ethically sensitive.

2. Data Acquisition and Quality Challenges

- Subjectivity and Variability: Belief-related data vary across individuals, populations, and cultures. Standardizing data to reflect reliable behavior or attitudes is difficult.

- Sparse or Noisy Data: Observational and self-reported data about beliefs can be inconsistent, biased, or incomplete, resulting in poor quality inputs for the digital twin.

- Temporal Evolution: Beliefs can shift rapidly due to events, social pressures, or exposure to information. Capturing this temporal variability requires continual data updates and adaptive models.

3. Technical Challenges

- Modeling Complexity: Capturing the cognitive, social, and cultural dimensions of belief systems necessitates hybrid modeling approaches (agent-based models, probabilistic graphical models, cognitive architectures) which are computationally intensive.

- Integration Across Domains: Belief systems interact with social networks, institutions, media, and environments. Integrating heterogeneous datasets (social media activity, surveys, psychological assessments) into a unified DT framework presents significant interoperability issues.

- Scalability and Real-time Simulation: Scaling to entire populations or online communities while maintaining granular fidelity is technically demanding.

4. Ethical and Privacy Concerns

- Consent and Data Sensitivity: Beliefs are deeply personal. Obtaining informed consent at scale and ensuring anonymity are core challenges.

- Bias, Manipulation, and Harm Risk: DTs of beliefs could be misused for psychographic targeting, social engineering, or political influence, raising serious ethical concerns.

- Cultural and Normative Relativism: Imposing universal modeling standards risks misrepresenting local or minority belief systems, leading to misinterpretations or unintended consequences.

5. Human-in-the-Loop and Validation Challenges

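- Verification Difficulty: A twin of a physical system can be checked against sensor measurements of its counterpart; a digital twin of a belief system has no such reference, so validating it is partly subjective. Feedback loops are often probabilistic rather than exact.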

- Human Interpretation Dependence: Users must interpret simulations cautiously; misinterpretations could undermine trust or lead to incorrect policy/organizational decisions.
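One standard way to validate probabilistic predictions, which fits the held-out survey setting described earlier, is a proper scoring rule such as the Brier score, plus a calibration table showing whether events predicted at, say, 80% happen about 80% of the time. The sketch below assumes the twin outputs a probability that a participant agrees with each held-out statement; the data are invented.

```python
def brier_score(predicted, observed):
    """Mean squared gap between predicted probabilities and 0/1 outcomes (lower is better)."""
    if len(predicted) != len(observed) or not predicted:
        raise ValueError("need equal-length, non-empty sequences")
    return sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(predicted)

def calibration_table(predicted, observed, bins=5):
    """Per probability bin: (low, high, mean prediction, observed frequency, count)."""
    groups = {}
    for p, o in zip(predicted, observed):
        k = min(int(p * bins), bins - 1)  # p == 1.0 falls into the top bin
        groups.setdefault(k, []).append((p, o))
    table = []
    for k in sorted(groups):
        ps, outcomes = zip(*groups[k])
        table.append((k / bins, (k + 1) / bins,
                      sum(ps) / len(ps), sum(outcomes) / len(outcomes), len(ps)))
    return table

# Twin's predicted probability that the participant agrees with each held-out statement,
# and what the participant actually answered (1 = agree).
predicted = [0.9, 0.8, 0.7, 0.2, 0.1, 0.6]
observed = [1, 1, 0, 0, 0, 1]
print(brier_score(predicted, observed))              # about 0.125
print(brier_score([0.5] * len(observed), observed))  # 0.25: uninformative baseline
for row in calibration_table(predicted, observed):
    print(row)
```

A score well below 0.25 means the twin beats always answering 50%; in practice, each calibration bin needs many more predictions than this toy example before it says anything meaningful.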

Frameworks for Addressing These Challenges

While these challenges are formidable, emerging strategies can mitigate some of them:

- Use hybrid cognitive-social models that integrate probabilistic reasoning, agent-based simulations, and networked dynamics.

- Implement privacy-preserving architectures (differential privacy, synthetic data generation).

- Adopt incremental prototyping using small, representative communities before scaling.

- Maintain human-in-the-loop feedback to validate predictions, interpret results contextually, and ensure ethical compliance.
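Differential privacy is the most concrete of these strategies to illustrate. The sketch below applies the Laplace mechanism to a single aggregate, the share of a panel that holds a belief; the panel, function names, and epsilon values are illustrative. Hand-rolled floating-point noise like this is known to leak information in subtle ways, so a production system would rely on a vetted differential-privacy library instead.

```python
import random

def laplace_noise(scale: float, rng: random.Random) -> float:
    """Laplace(0, scale) sample as the difference of two i.i.d. exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_share(answers, epsilon: float, rng=None) -> float:
    """
    Release the share of a panel holding a belief (answers are 0/1) under
    epsilon-differential privacy with the Laplace mechanism.
    One person changing their answer moves the count by at most 1 (sensitivity 1),
    so Laplace noise with scale 1/epsilon is added to the count. The panel size is
    treated as public, and clamping to [0, 1] is post-processing, which keeps the guarantee.
    """
    rng = rng or random.Random()
    noisy_count = sum(answers) + laplace_noise(1.0 / epsilon, rng)
    return min(1.0, max(0.0, noisy_count / len(answers)))

panel = [1] * 60 + [0] * 40  # 60% of a hypothetical panel hold the belief
rng = random.Random(7)
print(private_share(panel, epsilon=0.5, rng=rng))
```

Smaller epsilon means more noise and stronger privacy: at epsilon = 0.5 and 100 respondents, the released share typically lands within a few percentage points of the true 60%.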

Creating and managing these frameworks requires an interdisciplinary approach and collaboration among computational scientists, psychologists, sociologists, and ethicists, which is logistically and academically complex.

Institutional Trajectories: Partial Systems, Converging Capabilities

No single organization currently operates a complete belief twin system. However, multiple sectors are developing its constituent components.

Large technology firms such as Meta, Google, and OpenAI possess the computational infrastructure, data pipelines, and machine learning expertise required to construct large portions of the architecture. Their systems already encode user preferences and behavioral tendencies at scale.

Defense and research organizations – including DARPA, Parallax Advanced Research, and the University of Kentucky – are advancing formal models of collective behavior, decision-making, and information dynamics. These efforts are often framed in terms of national security, disinformation resilience, and strategic forecasting.

Under DARPA initiatives like MAGICS, researchers from Parallax Advanced Research and the University of Kentucky are moving beyond physical systems toward modeling complex human behavior, with the goal of spurring the development of rigorous methods to understand and predict human behavior with accuracy and nuance.

The Parallax approach incorporates:

- Cognitive variability between individuals

- Feedback loops and “belief attractors”

- Nonlinear evolution of attitudes
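Belief attractors and nonlinear attitude change can be illustrated with the textbook double-well (cusp) system, in which an attitude `x` drifts according to dx/dt = a*x - x^3 + u under an outside influence `u`. This is a generic dynamical-systems sketch with assumed parameters, not the Parallax model.

```python
def drift(x: float, influence: float, a: float = 1.0) -> float:
    """Double-well (cusp) dynamics: with zero influence, stable attitudes sit at +/- sqrt(a)."""
    return a * x - x ** 3 + influence

def settle(x: float, influence: float, dt: float = 0.01, steps: int = 5000) -> float:
    """Integrate dx/dt = drift(x) with forward Euler long enough to come (nearly) to rest."""
    for _ in range(steps):
        x += dt * drift(x, influence)
    return x

def sweep(x: float, influences) -> list:
    """Change the outside influence slowly, letting the attitude settle at each level."""
    path = []
    for u in influences:
        x = settle(x, u)
        path.append(x)
    return path

# Two nearly identical starting points fall into opposite attractors.
print(round(settle(0.1, 0.0), 3), round(settle(-0.1, 0.0), 3))  # 1.0 -1.0

# Hysteresis: ramp the influence up from -0.6 to 0.6, then back down.
up = [i / 10 for i in range(-6, 7)]
path_up = sweep(-1.0, up)
path_down = sweep(path_up[-1], list(reversed(up)))
print(round(path_up[6], 2), round(path_down[6], 2))  # attitude at zero influence: -1.0 1.0
```

Two effects show up: nearly identical starting points end in opposite stable attitudes, and the attitude at zero influence depends on history (hysteresis), so removing an influence does not simply undo its effect.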

According to Dr. Othalia Larue, Co-PI:

“Most current models overlook the breadth of cognitive and behavioral differences between individuals. Our system addresses this gap by modeling evolving cognitive states and external influences to predict changes at the individual level. By accounting for variability in how people form attitudes and integrating cognitive dynamics, the system produces richer, more accurate predictions of human outcomes.”

A slightly different approach is reported by Ishanu Chattopadhyay of the University of Kentucky:

“The model searches for the hidden organization tying together millions of sparse observations: the latent geometry of belief space, the couplings between ideas, and the constraints governing how societies transition between regimes. The resulting digital twins capture the emergent physics of opinion systems: stable belief configurations, metastable regimes, and the transitions between them.”

The mathematical concept of cocycles, which describe how a system evolves when it is driven by a changing external process such as a shifting information environment, also offers a compelling structure for understanding how individual and collective beliefs and opinions evolve over time. This approach not only enhances the understanding of dynamic social systems but also provides a framework for interventions aimed at guiding belief changes in desirable directions.
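In dynamical-systems terms, a cocycle Φ(n, ω) gives the evolution over n steps when the dynamics are driven by an external process ω, and the shift θ advances that process in time. Its defining property is Φ(n + m, ω) = Φ(n, θ^m ω) ∘ Φ(m, ω): evolving for m steps, then for n more steps under the advanced driver, equals evolving for n + m steps at once. The sketch below checks this property for a hypothetical linear model of opinions on two topics, where each day's information regime (the regime names and matrices are invented) maps today's opinions to tomorrow's. It illustrates the general concept only, not the University of Kentucky model.

```python
# 2x2 matrices as nested tuples keep the sketch dependency-free.
REGIME_MATRIX = {
    "calm":       ((0.95, 0.00), (0.00, 0.95)),
    "outrage":    ((1.10, 0.20), (0.05, 1.00)),
    "fact_check": ((0.80, -0.10), (0.00, 0.90)),
}
IDENTITY = ((1.0, 0.0), (0.0, 1.0))

def matmul(a, b):
    return tuple(tuple(sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2))
                 for i in range(2))

def shift(omega, m):
    """theta^m: advance the driving sequence by m steps."""
    return omega[m:]

def phi(n, omega):
    """Cocycle Phi(n, omega) = A(omega_{n-1}) ... A(omega_1) A(omega_0)."""
    result = IDENTITY
    for regime in omega[:n]:
        result = matmul(REGIME_MATRIX[regime], result)  # later days multiply on the left
    return result

def evolve(matrix, opinions):
    """Map a two-topic opinion vector through an evolution matrix."""
    return tuple(sum(matrix[i][k] * opinions[k] for k in range(2)) for i in range(2))

omega = ["calm", "outrage", "outrage", "fact_check", "calm", "outrage"]
m, n = 2, 3
whole = phi(n + m, omega)
composed = matmul(phi(n, shift(omega, m)), phi(m, omega))
print(all(abs(whole[i][j] - composed[i][j]) < 1e-12 for i in range(2) for j in range(2)))  # True
print(evolve(phi(len(omega), omega), (0.2, -0.1)))
```

Because of this decomposition, an intervention that only alters the driver after step m (for example, replacing a later "outrage" day with a "fact_check" day) can be evaluated by recomputing Φ(n, θ^m ω) while reusing Φ(m, ω) unchanged.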

One thing is constant across these different approaches: they all attempt to map the dynamics of belief itself.

Meanwhile, the commercial sector is witnessing the emergence of what might be termed “belief intelligence” firms. In the aftermath of the Cambridge Analytica controversy, psychographic targeting has not disappeared—it has evolved. Startups in behavioral analytics and personal AI systems are experimenting with increasingly granular models of user psychology.

Platforms like TrueU illustrate how belief intelligence works in practice. Instead of simply storing user data, these systems construct a multi-dimensional model of a person’s worldview, capturing:

- Core values

- Political and civic beliefs

- Cognitive style and mindset

- Social and relational patterns

- Sense of agency and identity

These models are not static. They evolve through:

- Memory architectures with relevance scoring

- Decay algorithms that forget outdated beliefs

- Contextual injection, surfacing the “right” beliefs at the right time
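A minimal sketch of how such a memory might work follows: relevance is scored as keyword overlap times stated importance times exponential recency decay, a forgetting step drops faded entries, and top-k retrieval plays the role of contextual injection. All names, the 30-day half-life, and the forgetting floor are hypothetical; this is not TrueU's implementation, which the article does not describe.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BeliefMemory:
    text: str
    keywords: frozenset
    importance: float  # 0..1, e.g. how central the user has said this belief is
    last_seen: float   # day the belief was last expressed

def recency(memory, now, half_life_days=30.0):
    """Exponential decay: weight halves every `half_life_days` since last mention."""
    return 0.5 ** ((now - memory.last_seen) / half_life_days)

def relevance(memory, context, now):
    """Keyword overlap with the current context x importance x recency."""
    overlap = len(memory.keywords & context) / max(1, len(context))
    return overlap * memory.importance * recency(memory, now)

def forget(memories, now, floor=0.05):
    """Decay step: drop beliefs whose importance x recency has faded below a floor."""
    return [m for m in memories if m.importance * recency(m, now) >= floor]

def inject_context(memories, context, now, k=2):
    """Contextual injection: the k most relevant beliefs to surface for this context."""
    scored = [(relevance(m, context, now), m.text) for m in memories]
    scored.sort(reverse=True)
    return [text for score, text in scored[:k] if score > 0]

memories = [
    BeliefMemory("Values local businesses over chains", frozenset({"shopping", "local"}), 0.8, 95),
    BeliefMemory("Skeptical of subscription pricing", frozenset({"shopping", "pricing"}), 0.6, 60),
    BeliefMemory("Prefers trains to flying", frozenset({"travel", "train"}), 0.9, 98),
    BeliefMemory("Once followed a game franchise", frozenset({"games"}), 0.3, 0),
]
now = 100
print(inject_context(memories, {"shopping", "pricing"}, now))
print([m.text for m in forget(memories, now)])
```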

The result is a digital twin that does not just remember what you said—it predicts how you will think.

What is currently missing is not capability, but integration, particularly the persistent modeling of identity over time.

Cognitive Vulnerabilities and Systemic Leverage

The effectiveness of belief twin systems derives from their alignment with well-documented properties of human cognition.

Research in psychology demonstrates that individuals engage in identity-protective cognition, rejecting information that threatens their sense of self. This dynamic, explored by scholars such as Dan Kahan, means that belief change is rarely driven by direct confrontation.

Instead, beliefs are reinforced through confirmation bias and belief perseverance, where individuals preferentially accept information that aligns with existing views. Contradictions are often resolved through reinterpretation rather than revision, a process associated with cognitive dissonance reduction.

Equally important is the role of social proof. Individuals are highly responsive to perceived group norms – particularly those of groups with which they identify. The perception that “people like me believe this” can be more influential than empirical evidence.

Finally, memory itself is not static. It is reconstructive. Each act of recall reshapes the narrative, creating opportunities for gradual influence that operates below the threshold of conscious awareness.

A system that models these dynamics does not need to persuade directly. It can instead shape the conditions under which beliefs evolve.
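Social proof, and the idea of shaping conditions rather than persuading, can be sketched with a DeGroot-style averaging model in which a person repeatedly moves toward a weighted average of the opinions they observe, with in-group voices weighted more heavily. The in-group weight of 3.0 and the opinion values are illustrative assumptions, not empirical estimates.

```python
def social_update(own, observed, self_weight=0.5, in_group_weight=3.0):
    """
    One DeGroot-style averaging step with identity-weighted social proof.
    own: current opinion in [0, 1]
    observed: list of (opinion, is_in_group) pairs the person sees
    """
    if not observed:
        return own
    weights = [in_group_weight if in_group else 1.0 for _, in_group in observed]
    social = sum(w * op for w, (op, _) in zip(weights, observed)) / sum(weights)
    return self_weight * own + (1 - self_weight) * social

def run(own, observed, steps=50, **params):
    """Repeat the update with a fixed set of observed opinions."""
    for _ in range(steps):
        own = social_update(own, observed, **params)
    return own

feed = [(0.9, True), (0.9, True), (0.1, False), (0.1, False), (0.1, False)]
print(round(run(0.5, feed), 2))                       # 0.63
print(round(run(0.5, feed, in_group_weight=1.0), 2))  # 0.42
# Shaping conditions: two extra in-group accounts, no direct message to the person.
print(round(run(0.5, feed + [(0.9, True)] * 2), 2))   # 0.74
```

With identity weighting, two in-group voices outweigh a three-person out-group majority (the opinion settles near 0.63 instead of 0.42), and adding two more in-group voices, real or synthetic, moves it to about 0.74 without any message ever addressing the person directly.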

Capability Trajectory and Structural Limits

My capability assessment of belief twins distinguishes between near-term capabilities and long-term projections.

In the short term (2–3 years), systems will continue to excel at behavioral prediction while offering only limited insight into belief structures. Segmentation will improve, but individual-level modeling will remain coarse.

Within a medium-term horizon (5–7 years), more refined belief profiles are likely to emerge, incorporating elements of identity and enabling early forms of persuasion optimization.

Over a longer horizon (10+ years), high-fidelity belief simulations may become feasible for individuals with extensive digital footprints. Systems could reliably forecast responses to information and identify pathways for incremental belief change.

However, several constraints are likely to persist.

Human cognition is inherently inconsistent; individuals hold contradictory beliefs and shift positions across contexts. There is no objective “ground truth” for belief against which models can be calibrated. Social systems exhibit emergent properties that resist prediction. These factors impose limits on precision – but not necessarily on influence.

Policy Concern: Epistemic Asymmetry

The central policy issue raised by belief twin systems is not simply manipulation, but epistemic asymmetry—a structural imbalance in who has the capacity to model, predict, and influence belief.

Historically, influence has been visible and contestable: advertising campaigns, political messaging, public discourse. In contrast, belief twin systems operate at the level of individualized cognition, often without explicit signals of persuasion.

This enables three forms of capability that carry significant societal implications and corresponding risks.

If a system can accurately model an individual’s motivations using RICE-like structures, it can also:

- Tailor persuasion with unprecedented precision – precision persuasion allows messaging to be tailored not just to demographics, but to the internal logic of an individual’s belief system.

- Exploit psychological vulnerabilities – belief trajectory shaping shifts the focus from immediate persuasion to long-term guidance, subtly influencing how beliefs develop over time.

- Influence decisions without explicit awareness – artificial consensus creates environments in which perceived agreement is manufactured, reinforcing beliefs through simulated social validation.

This raises profound ethical questions that researchers and designers must not overlook:

- Who owns your belief model?

- How should consent be managed when reusing a participant’s data to create a long-lasting proxy?

- What are the risks of misrepresentation, especially if a twin is used in contexts beyond its original intent?

- Would users agree to having a digital version of themselves created to fill out surveys or predict their future behavior?

- Can a digital twin act on your behalf?

- Where is the boundary between assistance and manipulation?

The concern is not hypothetical. As these systems mature, they may become central tools in marketing, politics, and social influence. These capabilities challenge existing regulatory frameworks, which are largely designed around transparency in messaging rather than transparency in cognitive modeling.

Toward Governance and Safeguards

If belief twin systems represent a new layer of infrastructure, they require corresponding governance mechanisms.

Several policy directions emerge:

- Transparency requirements for systems that model or infer belief states, particularly when used in political or informational contexts

- Data rights frameworks that extend beyond behavioral data to include inferred cognitive and identity attributes

- Auditability standards for algorithms that optimize persuasion or influence

- Restrictions on synthetic consensus generation, including disclosure requirements for simulated social environments

- Public-interest research into defensive tools, such as systems that allow individuals to inspect or counter-model their own belief representations

These measures are not straightforward. They require balancing innovation, privacy, free expression, and security. However, the absence of governance would leave the development of belief twin systems entirely in the hands of actors with the greatest technical and economic power.

A Philosophical Inflection Point

At its deepest level, the emergence of belief twins signals a transition in what is being modeled and why. This creates a recursive dynamic: models of belief can influence belief, which in turn shapes perception, decision-making, and collective outcomes. That recursion is a form of power:

Whoever models belief…

can shape belief…

which shapes reality.

In such a system, influence is no longer episodic. It becomes continuous, embedded, and adaptive. If this vision is realized, a system could:

- Predict: Your opinions before you form them

- Identify: Your “belief breaking points”

- Simulate: Your reaction to future events

- Influence: Without explicit persuasion

At that point, it’s not just AI. It’s a model of your epistemic self.

Conclusion: The Need for Deliberate Design

Belief twin systems are not inevitable in their final form, but their trajectory is clear. The convergence of data, machine learning, and cognitive science is producing systems that increasingly approximate how individuals think, not just what they do.

This development presents a choice.

These systems can be designed as tools for understanding—enhancing education, improving communication, and strengthening resilience against disinformation. Or they can become instruments of asymmetric influence, operating below the level of awareness and outside the reach of existing safeguards.

The distinction will not be determined by technology alone. It will be determined by policy, governance, and the degree to which societies recognize that the modeling of belief is not merely a technical capability, but a form of power. Understanding that shift is the first step toward shaping it.

Is your epistemic anchor strong enough to withstand manipulation by an AI belief influencer? What is your view on belief twins? Can we design adequate governance frameworks to safeguard against the abuse of belief twins? And thanks to my subscribers and visitors to my site for checking out ActiveCyber.net! Please give us your feedback because we’d love to know some topics you’d like to hear about in the area of active cyber defenses, artificial intelligence, authenticity, quantum cryptography, risk assessment and modeling, autonomous security, digital forensics, securing OT / IIoT and IoT systems, Augmented Reality, or other emerging technology topics. Also, email chrisdaly@activecyber.net if you’re interested in interviewing or advertising with us at Active Cyber™.